The Voluntary Agency Network of Korea (VANK) said it has begun a comprehensive analysis of how Africa is portrayed in the digital information environment, pointing to patterns of bias in news coverage, search algorithms and generative artificial intelligence.

The group said the project extends earlier efforts, which focused on textbooks, maps and encyclopedias, to the digital channels people now encounter most often, such as online headlines, search results, AI-generated summaries and recommended images.

VANK previously campaigned to correct biased descriptions of Africa in Korean textbooks, prompting government review, and later expanded its efforts to overseas educational materials. The new analysis shifts attention to how everyday digital information shapes public perception.

According to VANK, Africa is still frequently associated with keywords such as poverty, conflict, aid and crisis. While such descriptions may reflect real events, repeated use can reduce the continent to a single image and obscure its diversity and development.

The group said many people form impressions of Africa indirectly through digital platforms rather than through direct experience. It therefore examined news reports, generative AI systems and search portal algorithms as key drivers of perception.

The findings showed that news coverage often uses Africa as a benchmark in negative comparisons, with expressions such as “poorer than Africa” appearing in both domestic and international media. VANK warned that repeated use of such comparisons can reinforce stereotypes even when individual comparisons are fact-based.

The organization also reported racial disparities in AI-generated images. In tests with tools including ChatGPT and Gemini, images of professionals such as prosecutors, judges, professors and doctors depicted white people about 74 percent of the time and Black people only 3 percent of the time.

In addition, AI-generated images of Africa tended to depict rural settings, traditional clothing and manual labor, while images of Europe, Asia and the Americas more often showed modern urban life. VANK said the pattern reflects how training data has accumulated around Western societies and how AI reproduces those patterns.

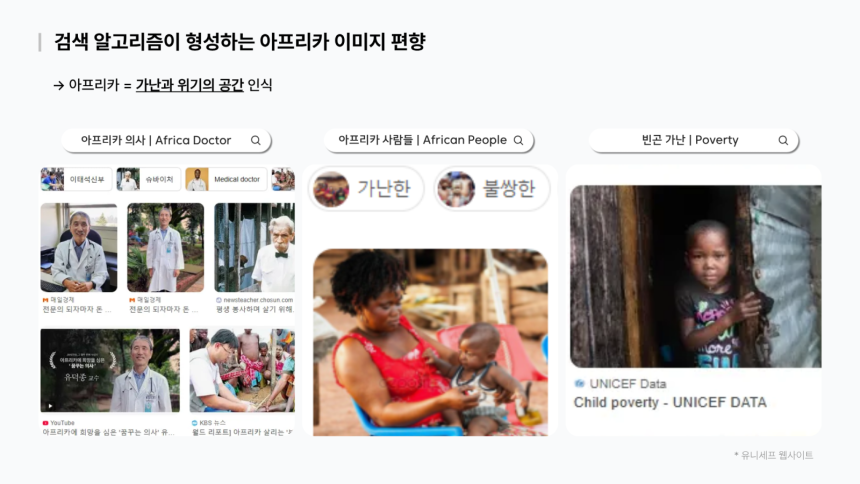

Search results were also found to play a role. Searches for terms such as “African people” on major portals often displayed images associated with poverty or underdevelopment at the top, while images showing modern life and diverse cultural contexts in Africa appeared less frequently.

VANK attributed part of this pattern to the widespread use of fundraising images by international aid groups, saying such materials, though created with good intentions, can keep Africa framed primarily through poverty.

The group said it plans to respond by building datasets that compile examples of biased language and imagery along with alternative expressions and visuals. The materials are intended for use in media, education and AI training environments to help present a more balanced view of Africa.

Director Park Gi-tae said Africa should not be viewed merely through external perspectives but should be recognized as a region with its own history and narratives. He added that without reliable data reflecting Africans’ voices and everyday lives, distorted images will continue to be reproduced in digital spaces.

VANK said it will continue collecting and providing data and content aimed at improving how Africa is understood in the global information environment.